SAFe® Enterprise Subscription

Your organization’s key to accessing SAFe at Scale

We’ve taken everything you know and love about SAFe and created a single, easy-to-manage SAFe® Enterprise subscription that enables you to provide SAFe training and resources to all of your employees.

- Empower your employees

- Exclusive courses

- Access for everyone in your organization

- Enterprise-grade security

Transform Your Company

Become a more responsive, resilient organization

- Follow a proven SAFe roadmap

- Evaluate your enterprise’s agility with online business agility assessments

- Track your organization’s progress towards business agility with a wide variety of interactive tools and dashboards

Determine how you’re doing and how to improve with business agility assessments and core competency assessments. Achieve early wins and long-term gains by building on the foundation of the SAFe implementation roadmap, your step-by-step guide to creating real change.

Empower Your Employees

Give your employees the tools to drive success

- Attend unlimited SAFe courses

- Quickly get new teams up to speed with SAFe Jumpstart, and empower trainers to assign individual learning plans and track progress

- Manage your organization’s certifications in one convenient location

- Ask questions in community forums to learn from other SAFe professionals around the world

- Gain access to exclusive courses to engage more areas of your organization

Grow and retain talent by ensuring that your employees are up to date with their certifications and professional development. Connect your employees to a wealth of SAFe knowledge and real-world application with community forums. Get unlimited access to virtual learning so your employees can train from anywhere.

For Marketing Teams

Teach marketing teams how to use Lean, Agile, and SAFe practices and principles. This course is designed to guide the implementation of SAFe and Agile practices in marketing.

For Enterprise Leaders

Equip enterprise leaders with the skills to navigate change, empower teams, manage risk, build an engaging company culture, and respond to threats and opportunities.

Facilitate Daily Learning

Embed effective practices into your day-to-day

- Facilitate key SAFe events from anywhere, such as Inspect & Adapt and iteration retrospectives

- Organize virtual classrooms that integrate with SAFe courses and include interactive activities

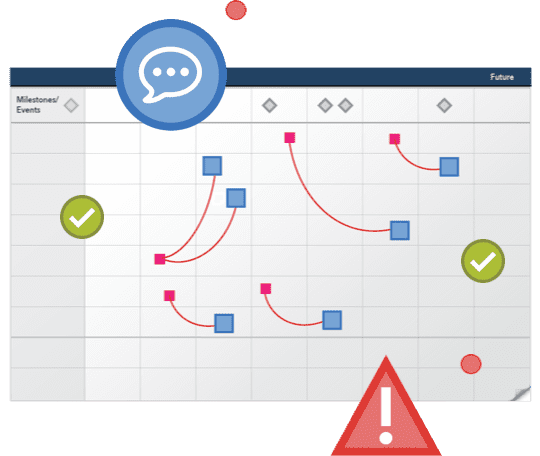

- Invent, prioritize, and engage teams worldwide with SAFe Studio™

Run virtual PI planning, participatory budgeting, and other key SAFe events and activities in real time and across time zones with SAFe Studio, a cloud-based visual workspace. Engage members in remote training and learning with the SAFe virtual classroom.